The Challenge

Mid-market employers had no self-serve way to manage jobs and campaign spend on Indeed. Most relied on account representatives or agencies to plan budgets, structure campaigns, and optimize performance. Campaign management was manual, dependent on human judgment, experience, and constant oversight.

This made campaign management difficult to scale, inconsistent across accounts, and time-intensive for both employers and internal teams.

The opportunity was to introduce AI-driven automation that could evaluate job performance, recommend how budget should be allocated, and continuously adjust campaigns based on real results. The risk was significant. If the system did not produce strong outcomes or align with employer expectations, it would not be trusted or adopted.

Approach

Build, observe, adapt

Rather than defining the system upfront and validating later, we moved to a real product early. Design and engineering worked together in code, allowing ideas to be tested immediately in real scenarios with real campaign data.

The key deciding factor was not how the system looked. It was how well it performed.

Research and product development ran in parallel, so decisions were shaped by real employer behavior, campaign performance, and market conditions, not assumptions.

Defining the Optimization System

Early concepts were not only about the interface, but also about how the system should make decisions.

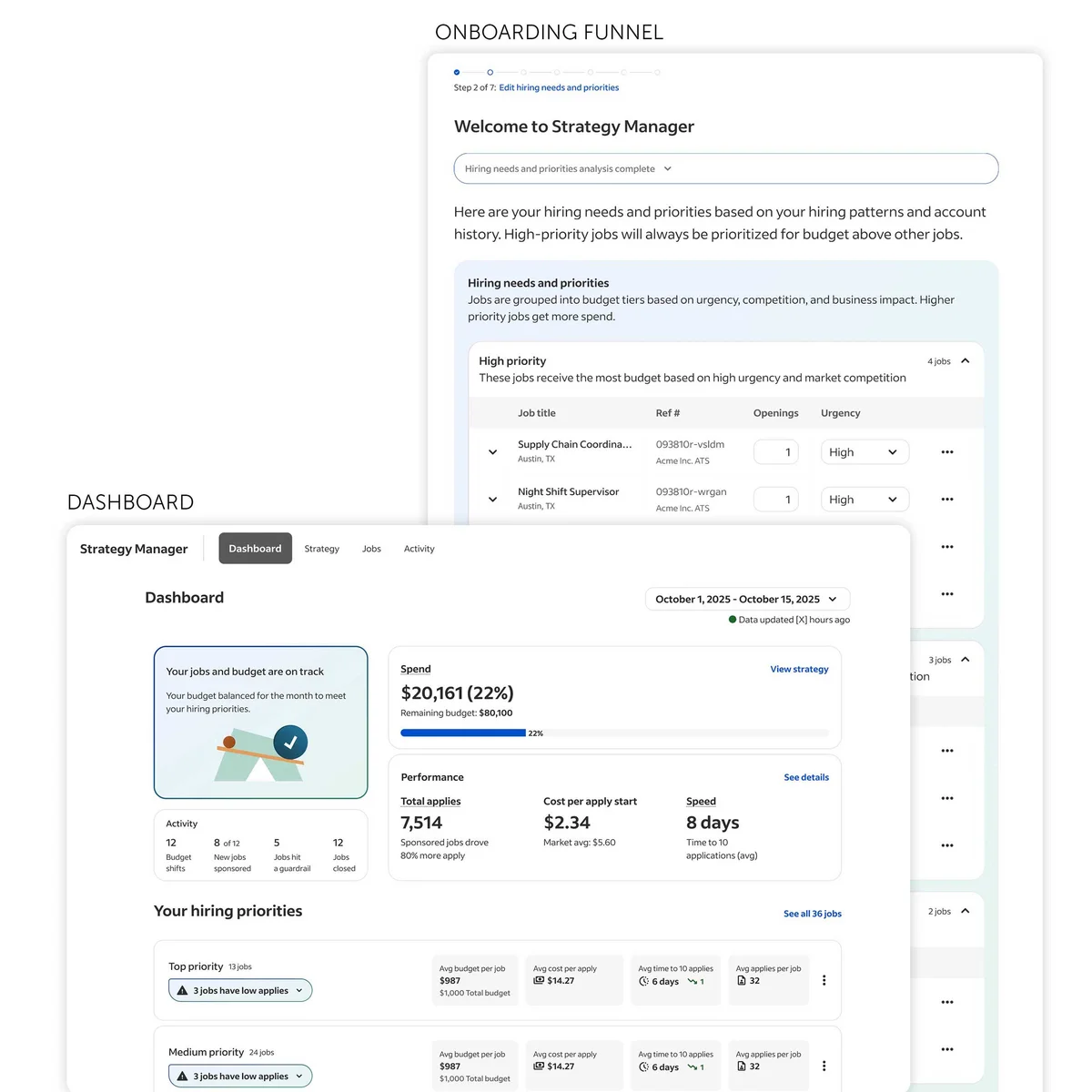

The core question was how AI could plan and manage campaign spend in a way that outperformed manual decision-making. The system needed to evaluate job performance and market conditions, determine how budget should be allocated across jobs, structure campaigns to maximize applies, and continuously adjust spend as performance changed over time.
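As a rough illustration of the allocation step described above, the sketch below splits a fixed budget across jobs in proportion to each job's observed applies per dollar. It is not the production logic: the `JobStats` fields, the `allocate_budget` function, the cold-start rule, and the proportional rule itself are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class JobStats:
    """Hypothetical per-job performance summary (illustrative fields)."""
    job_id: str
    spend: float    # dollars spent so far
    applies: int    # applies received so far


def allocate_budget(jobs: list[JobStats], total_budget: float) -> dict[str, float]:
    """Split total_budget across jobs in proportion to applies per dollar.

    Jobs with no spend yet have no measured efficiency, so they are given
    the average efficiency of the measured jobs (an assumed cold-start rule).
    """
    if not jobs:
        return {}
    measured = [j.applies / j.spend for j in jobs if j.spend > 0]
    default = sum(measured) / len(measured) if measured else 1.0
    efficiency = {
        j.job_id: (j.applies / j.spend if j.spend > 0 else default) for j in jobs
    }
    total = sum(efficiency.values())
    if total == 0:
        # No job has produced applies yet: fall back to an even split.
        return {j.job_id: total_budget / len(jobs) for j in jobs}
    return {job_id: total_budget * e / total for job_id, e in efficiency.items()}


jobs = [
    JobStats("nurse", spend=100.0, applies=20),   # 0.20 applies per dollar
    JobStats("driver", spend=100.0, applies=5),   # 0.05 applies per dollar
    JobStats("cook", spend=0.0, applies=0),       # new job, no data yet
]
plan = allocate_budget(jobs, total_budget=300.0)
```

Re-running a function like this as new performance data arrives is one simple way to approximate continuous adjustment; a real system would likely also account for market conditions and diminishing returns.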

Rather than defining this logic in theory, we brought it into a real product early.

What this revealed was critical. Performance quality mattered more than automation itself, and employers needed to understand how decisions were being made. Recommendations had to reflect real constraints and business goals, and the system needed to continuously adapt rather than rely on static plans. These insights only emerged through real campaign data and real employer interaction.

Evolving from Manual Process to Decision System

As the product matured, it became clear that the work was not simply a matter of introducing automation.

The product evolved into a system that planned campaign strategy, allocated and adjusted spend, evaluated performance over time, and continuously adapted decisions based on results.

This shifted the experience from manual campaign management to AI-driven performance optimization. The role of the interface evolved alongside it: to make system decisions understandable, provide clear rationale behind recommendations, and allow employers to guide, adjust, and override decisions when needed.

This was not just about control. It was about aligning system behavior with how employers think about performance and outcomes.

Designing for Real-World Constraints

Campaign performance is not only a system problem. It is a business problem. Employers have different goals, constraints, and expectations around spend, performance, and outcomes.

To work effectively, the system needed to reflect employer priorities, operate within real budget constraints, and adapt to varying job performance and market conditions, all while balancing system optimization with employer control.
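One way to picture the balance between system optimization and employer control is a final pass over the system's proposed budgets that applies employer overrides first and then enforces a per-job cap, attaching a plain-language reason to each result. The sketch below is hypothetical: the `Recommendation` record, the `finalize` function, and the rule that an override always wins are assumptions, not a description of the shipped system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Recommendation:
    """Hypothetical output record: a budget plus a human-readable reason."""
    job_id: str
    budget: float
    rationale: str


def finalize(
    proposed: dict[str, float],
    max_per_job: float,
    overrides: Optional[dict[str, float]] = None,
) -> list[Recommendation]:
    """Apply employer overrides first, then cap remaining system proposals."""
    overrides = overrides or {}
    results = []
    for job_id, amount in proposed.items():
        if job_id in overrides:
            # Assumed rule: the employer's explicit choice wins over the system.
            results.append(Recommendation(job_id, overrides[job_id], "set by employer"))
        elif amount > max_per_job:
            reason = f"capped at per-job limit of {max_per_job:.2f}"
            results.append(Recommendation(job_id, max_per_job, reason))
        else:
            results.append(Recommendation(job_id, amount, "system recommendation"))
    return results


recs = finalize(
    proposed={"nurse": 160.0, "driver": 40.0, "cook": 100.0},
    max_per_job=120.0,
    overrides={"driver": 60.0},
)
```

This sketch does not redistribute budget freed by a cap or reconcile overrides against a total budget; it only shows how each decision can carry its own rationale.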

Working with real campaign data exposed the gap between ideal system behavior and real-world needs, allowing those gaps to be addressed before scaling.

Validating Through Real Performance

Validation was grounded in real campaign data and real employer workflows. The goal was not to test ideal recommendations, but to understand whether the system improved performance, whether budget decisions were effective, and whether employers trusted and could act on the system’s output.

What emerged was clear. Performance outcomes determined trust more than interface design. Employers needed clear reasoning behind spend decisions, and systems that continuously adjusted based on performance consistently outperformed static approaches.

By validating against real outcomes, decisions were made earlier and with greater confidence.

Impact

AI Job Campaign Manager validated a new model for campaign management. It shifted campaign strategy from manual decision-making to AI-driven optimization, enabling dynamic budget allocation based on real performance data.

It introduced systems that continuously evaluate and adjust campaign strategy, established patterns for transparent and controllable AI decision-making, and improved alignment between system recommendations and employer goals.

By validating performance early, it also reduced product and technical risk before scaling investment.

Key Contributions that Shaped the Outcome

We defined a new model for AI-driven campaign optimization, shaping how systems evaluate performance, allocate spend, and continuously refine strategy.

We validated decision-making using real performance data, ensuring the system produced meaningful, effective outcomes.

We aligned system behavior with employer needs by incorporating real goals, constraints, and preferences into how decisions were made.

We designed for trust and control, making system behavior understandable and adjustable so employers could confidently rely on automation.

We also established patterns for adaptive AI systems that continuously evaluate, adjust, and improve over time.

What We Delivered

We delivered early validation of an AI-driven campaign management system before broad rollout, along with a real, testable product used to evaluate performance and employer interaction in live scenarios.

The system plans, allocates, and optimizes campaign spend based on real data, continuously adjusting to maximize job applies.

This work established foundational patterns for integrating AI into high-stakes, performance-driven decision-making workflows.

Why Our Approach Matters

The success of this product depended on the quality of its decisions. That could not be validated through static designs.

The system needed to be tested against real campaign performance, budget constraints, and changing market conditions.

Validation in real campaign environments made it possible to understand not just how the system behaved, but whether it made effective decisions over time.

Trust, control, and usability were shaped through real performance, ensuring employers could rely on the system to manage spend and improve outcomes.